The more artificial intelligence enters our lives, the more important ethics and philosophy become. Below are four book recommendations.

AI Ethics

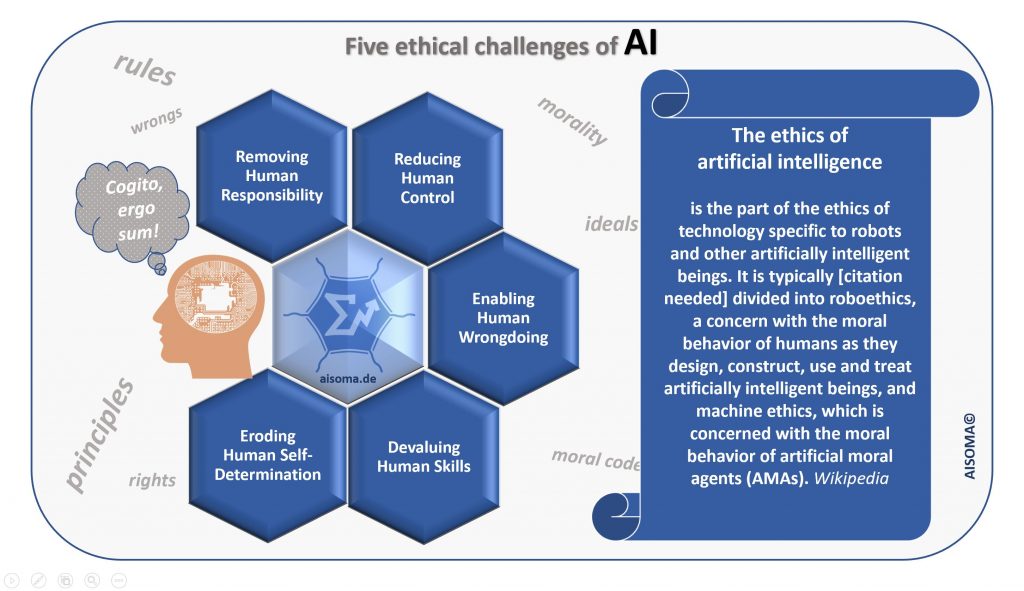

The ethics of artificial intelligence is part of the ethics of technology specific to robots and other artificially intelligent beings. It is typically divided into roboethics, a concern with the moral behavior of humans as they design, construct, use and treat artificially intelligent beings, and machine ethics, which is concerned with the moral behavior of artificial moral agents (AMAs). (Wikipedia)

AI Philosophy

Artificial intelligence has close connections with philosophy because the two share several concepts, including intelligence, action, consciousness, epistemology, and even free will.[1] Furthermore, the technology is concerned with the creation of artificial animals or artificial people (or, at least, artificial creatures), so the discipline is of considerable interest to philosophers.[2] These factors contributed to the emergence of the philosophy of artificial intelligence. Some scholars argue that the AI community’s dismissal of philosophy is detrimental. (Wikipedia)

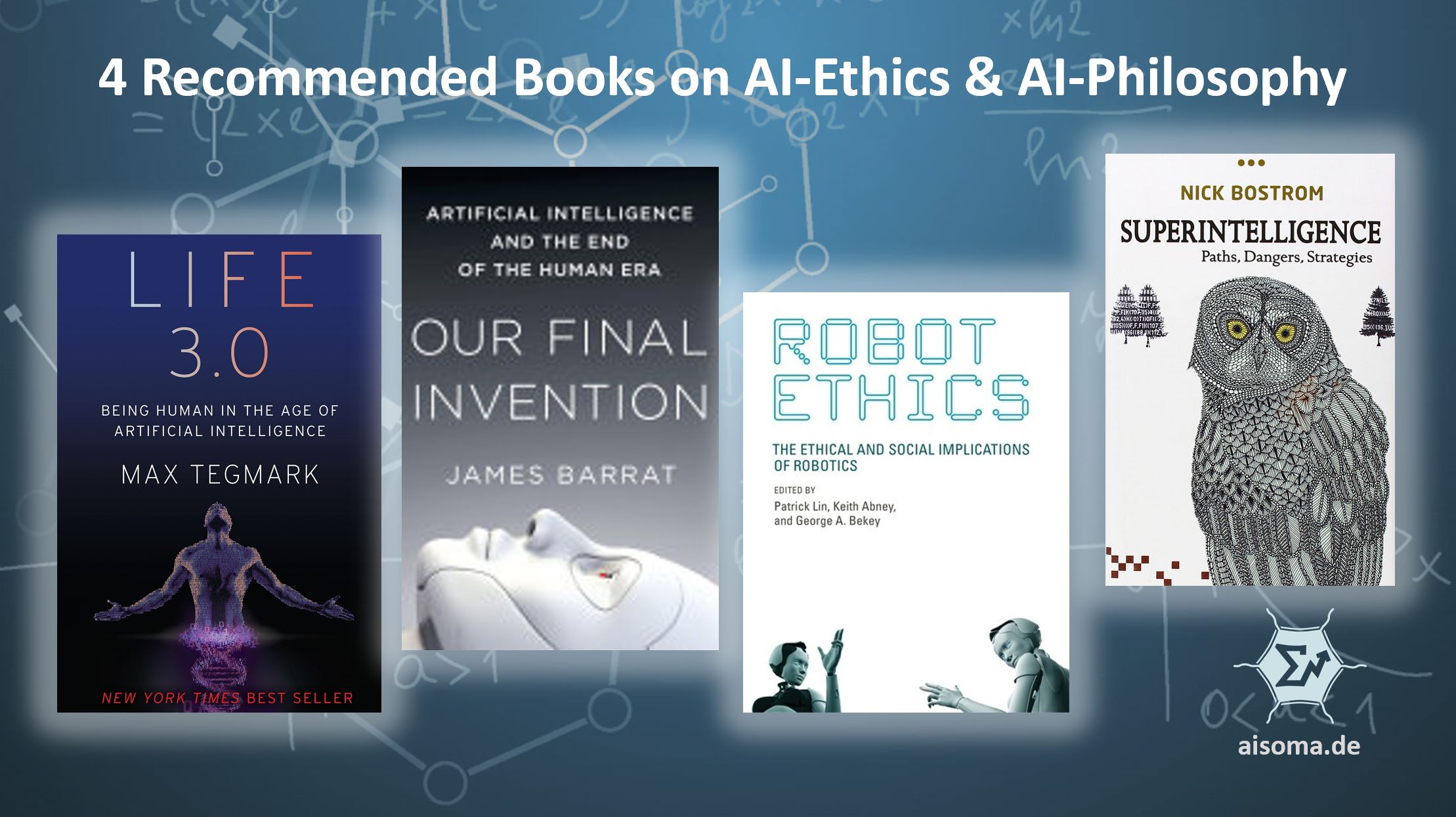

[table id=4 /]

AISOMA

Further reading:

- 1, 2, 3 … AI is coming to get you!

- 5 Variations of AI & 3 essays for the fundamental understanding of AI