This term covers all kinds of machine learning in which the desired output is unknown and there is no teacher to guide the learning algorithm. In unsupervised learning, the learning algorithm receives only the input data and is asked to extract knowledge from it.

There are two main types of unsupervised learning:

1. Unsupervised transformations

2. Clustering

Unsupervised Transformation

These are algorithms that create a new representation of the data that is easier for humans or other machine learning algorithms to understand than the original representation. A typical application of unsupervised transformations is dimensionality reduction, which takes a high-dimensional representation of the data with many features and summarizes it with a small number of essential features. A common example is projecting the data onto two dimensions so that it can be visualized and therefore understood better. Another useful application of unsupervised transformations is finding the parts or components that make up the data.
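
As one concrete instance of dimensionality reduction, the sketch below uses principal component analysis (PCA), a common choice that the text itself does not name. It assumes NumPy; the helper `pca_project` and the synthetic data (five features generated from two hidden factors) are illustrative only. The data are centered, a singular value decomposition finds the directions of largest variance, and every sample is projected onto the top two directions so it can be plotted in 2-D.

```python
import numpy as np

def pca_project(X, n_components=2):
    """Project the rows of X onto their top principal components (PCA via SVD)."""
    X = np.asarray(X, dtype=float)
    X_centered = X - X.mean(axis=0)          # PCA works on mean-centered data
    # Rows of Vt are the principal directions, ordered by decreasing singular value
    _, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]
    projected = X_centered @ components.T    # coordinates in the new low-dimensional space
    variance = S ** 2                        # proportional to the variance along each direction
    explained_ratio = variance[:n_components] / variance.sum()
    return projected, explained_ratio

# Hypothetical data: 200 samples with 5 features, generated from only 2 hidden factors plus a little noise
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))
mixing = rng.normal(size=(2, 5))
X = latent @ mixing + 0.05 * rng.normal(size=(200, 5))

X_2d, explained = pca_project(X, n_components=2)
print(X_2d.shape)       # (200, 2): ready for a 2-D scatter plot
print(explained.sum())  # share of the total variance kept by the two components
```

scikit-learn's `sklearn.decomposition.PCA` class offers the same projection through `fit_transform`; the hand-written version only makes the individual steps visible.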

An example of this is topic extraction from a collection of text documents. The task is to find the unknown topics discussed across the collection and to learn which topics appear in each document. Such methods can be useful, for example, for tracking discussions of subjects such as elections, legislation, or pop stars.
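
Topic extraction is commonly done with non-negative matrix factorization (NMF) or latent Dirichlet allocation. Since the text names no method, the sketch below assumes NMF with the multiplicative update rules of Lee and Seung, written with NumPy. The vocabulary, the word counts, and the helper `nmf` are made up for illustration. The document-word count matrix `V` is approximated as `W @ H`: each row of `H` weights the vocabulary and acts as a topic, and each row of `W` says how much of each topic a document contains.

```python
import numpy as np

def nmf(V, n_topics, n_iter=500, seed=0, eps=1e-9):
    """Factor a non-negative matrix V (documents x words) as V ~ W @ H
    using multiplicative updates for the squared reconstruction error."""
    rng = np.random.default_rng(seed)
    n_docs, n_words = V.shape
    W = rng.random((n_docs, n_topics)) + eps   # document-topic weights
    H = rng.random((n_topics, n_words)) + eps  # topic-word weights
    for _ in range(n_iter):
        # Multiplying by a ratio of non-negative terms keeps every entry non-negative
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

# Hypothetical vocabulary and word counts: documents 0-2 discuss an election, documents 3-5 a pop star
vocab = ["vote", "ballot", "candidate", "album", "concert", "fans"]
V = np.array([
    [3, 2, 4, 0, 0, 0],
    [2, 3, 3, 0, 0, 0],
    [4, 1, 2, 0, 0, 1],
    [0, 0, 0, 3, 4, 2],
    [0, 1, 0, 2, 3, 3],
    [0, 0, 0, 4, 2, 3],
], dtype=float)

W, H = nmf(V, n_topics=2)
for k, topic in enumerate(H):
    top_words = [vocab[i] for i in np.argsort(topic)[::-1][:3]]  # highest-weighted words first
    print(f"topic {k}: {top_words}")
print("dominant topic per document:", W.argmax(axis=1))
```

For real corpora, scikit-learn's `NMF` and `LatentDirichletAllocation` classes are the usual starting points.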

Clustering

Clustering, on the other hand, partitions the data into distinct groups of similar items. As an example, consider uploading photos to a social network. To help organize your pictures, the site might want to group together the pictures that show the same person. However, the site does not know who appears in which picture, nor how many different people are in your photo collection. A sensible approach would be to extract all the faces and divide them into groups of faces that look similar.
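
Because the site does not know how many people appear in the collection, a grouping method that does not need the number of groups in advance fits this example. The sketch below assumes that each face has already been turned into a numeric feature vector; the 2-D "embeddings" are invented stand-ins for what a face-recognition model would produce, and `group_faces` and its `threshold` are hypothetical. Any two faces closer than the threshold are linked, and each connected group is treated as one person. This is single-linkage grouping implemented with a union-find structure, one option among many.

```python
import math

def group_faces(embeddings, threshold):
    """Group face vectors: faces closer than `threshold` (directly or via a chain) share a group."""
    parent = list(range(len(embeddings)))

    def find(i):
        # Follow parent links to the group's representative, shortening the path on the way
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(embeddings)):
        for j in range(i + 1, len(embeddings)):
            if math.dist(embeddings[i], embeddings[j]) < threshold:
                parent[find(i)] = find(j)  # merge the two groups

    groups = {}
    for i in range(len(embeddings)):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())

# Hypothetical 2-D embeddings for six detected faces (real embeddings have many more dimensions)
faces = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2),   # person A
         (5.0, 5.1), (5.2, 4.9),               # person B
         (9.0, 0.0)]                           # person C
print(group_faces(faces, threshold=1.0))
# [[0, 1, 2], [3, 4], [5]]
```

The number of groups falls out of the data and the threshold instead of being fixed beforehand, which matches the situation described above.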

Challenges of Unsupervised Learning

The main challenge in unsupervised learning is evaluating whether the algorithm has learned anything useful. Unsupervised learning algorithms are usually applied to unlabeled data, so we do not know what the correct output should look like. This makes it very hard to judge whether a model has done well. For this reason, unsupervised algorithms are often used in an exploratory phase, when a data scientist wants to understand the data better, rather than as part of a largely automated system.

Another typical application of unsupervised algorithms is as a preprocessing step for supervised algorithms. Learning a new representation of the data can improve the accuracy of the supervised algorithm or reduce its memory and time requirements.
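
To make the preprocessing idea concrete, the sketch below (assuming NumPy, with synthetic data invented for illustration) fits PCA on the training features alone, without looking at the labels, and then trains a simple nearest-centroid classifier on the reduced data. Each sample shrinks from 50 numbers to 2, which is where savings in memory and time come from. Whether accuracy improves depends on the data; on this synthetic set the reduced representation still separates the two classes.

```python
import numpy as np

rng = np.random.default_rng(1)

def make_data(n_per_class, n_features=50):
    """Hypothetical two-class data: the classes differ only in the first 3 of 50 features."""
    shift = np.zeros(n_features)
    shift[:3] = 4.0
    X0 = rng.normal(size=(n_per_class, n_features))
    X1 = rng.normal(size=(n_per_class, n_features)) + shift
    y = np.array([0] * n_per_class + [1] * n_per_class)
    return np.vstack([X0, X1]), y

X_train, y_train = make_data(100)
X_test, y_test = make_data(50)

# Unsupervised preprocessing: fit PCA on the training features only; the labels are not used
mean = X_train.mean(axis=0)
_, _, Vt = np.linalg.svd(X_train - mean, full_matrices=False)
components = Vt[:2]

def project(X):
    """Apply the learned projection to any data set (training data or new data)."""
    return (X - mean) @ components.T

Z_train, Z_test = project(X_train), project(X_test)

# Supervised step: a nearest-centroid classifier trained on the 2-D representation
centroids = np.array([Z_train[y_train == c].mean(axis=0) for c in (0, 1)])
dists = np.linalg.norm(Z_test[:, None, :] - centroids[None, :, :], axis=2)
y_pred = dists.argmin(axis=1)
accuracy = (y_pred == y_test).mean()

print("features per sample:", X_train.shape[1], "->", Z_train.shape[1])
print("test accuracy:", accuracy)
```

The projection is learned once from the training data and then reused unchanged for new data, so the supervised model always sees the same representation.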

Two algorithms that are commonly used in unsupervised learning are k-means and Apriori.

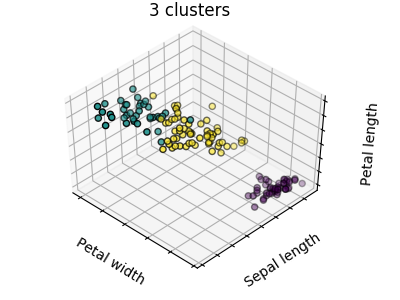

k-Means

The k-means algorithm is a vector quantization method that is also used for cluster analysis. It forms a number k of groups, fixed in advance, from a set of similar objects. It is one of the most widely used clustering techniques because it finds the cluster centers quickly. The algorithm favors clusters with low variance and similar size. (Source: Wikipedia)

(Three clusters – source: scikit-learn)
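
The alternation at the heart of k-means (Lloyd's algorithm) can be sketched with NumPy as follows. This is a minimal sketch, assuming k-means++ seeding for the initial centers (also scikit-learn's default) and a synthetic data set of three blobs; the function names are hypothetical. Each iteration assigns every point to its nearest center and then moves each center to the mean of its assigned points, stopping once the centers no longer move.

```python
import numpy as np

def kmeans_plus_plus_init(X, k, rng):
    """k-means++ seeding: spread the initial centers out over the data."""
    centers = [X[rng.integers(len(X))]]
    for _ in range(1, k):
        # Squared distance from every point to its closest center chosen so far
        d2 = np.min([np.sum((X - c) ** 2, axis=1) for c in centers], axis=0)
        # Far-away points are proportionally more likely to become the next center
        centers.append(X[rng.choice(len(X), p=d2 / d2.sum())])
    return np.array(centers)

def k_means(X, k, max_iter=100, seed=0):
    """Lloyd's algorithm: alternate an assignment step and an update step."""
    rng = np.random.default_rng(seed)
    centers = kmeans_plus_plus_init(X, k, rng)
    for _ in range(max_iter):
        # Assignment step: each point joins the cluster of its nearest center
        dists = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Update step: move each center to the mean of its points (keep it if the cluster is empty)
        new_centers = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break  # centers stopped moving, so the assignment is stable
        centers = new_centers
    return labels, centers

# Hypothetical data: three well-separated blobs of 50 points each
rng = np.random.default_rng(42)
blob_centers = np.array([[0.0, 0.0], [10.0, 10.0], [0.0, 10.0]])
X = np.vstack([c + rng.normal(scale=0.5, size=(50, 2)) for c in blob_centers])

labels, centers = k_means(X, k=3)
print(np.round(centers, 1))  # one row per cluster center
print(np.bincount(labels))   # number of points in each cluster
```

Because the result depends on the starting centers, practical implementations such as scikit-learn's `KMeans` run several initializations and keep the one with the lowest within-cluster sum of squares.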

Apriori

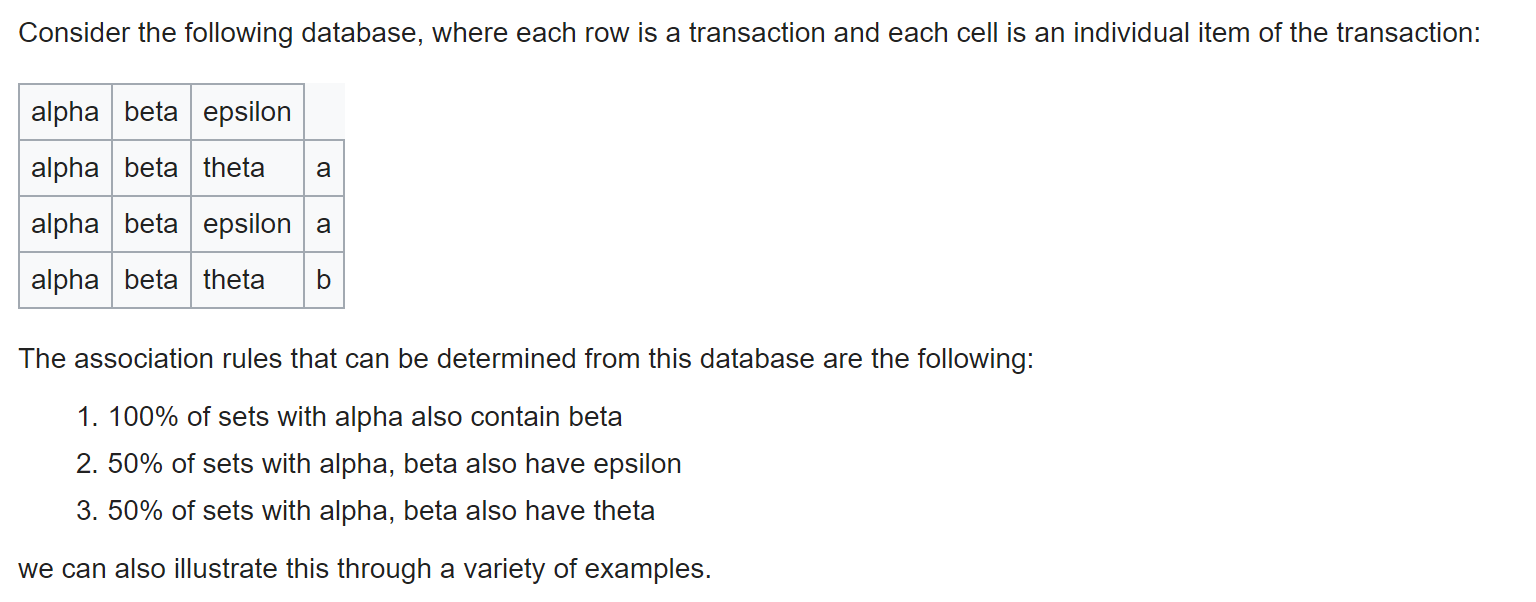

The Apriori algorithm is a method for association analysis, a field of data mining. It finds meaningful and useful relationships in transaction-based databases and expresses them as so-called association rules. A typical application of the Apriori algorithm is shopping basket analysis: the items are the products on offer, and each purchase is a transaction containing the items bought. The algorithm then determines correlations of the form: “When shampoo and aftershave were bought, shaving cream was also bought in 90% of cases.”

(Simple Apriori example – source: Wikipedia)
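
The level-wise search behind Apriori can be sketched in plain Python. The sketch assumes a support threshold (the minimum fraction of baskets an itemset must appear in) and a confidence threshold for rules; the helper names `apriori` and `rules` and the five shopping baskets are invented for illustration. Candidates of size k are built from frequent itemsets of size k - 1 and discarded if any of their subsets is infrequent, because an itemset can never occur more often than its subsets.

```python
from itertools import combinations

def apriori(transactions, min_support):
    """Return every itemset whose support (fraction of transactions containing it) is >= min_support."""
    transactions = [frozenset(t) for t in transactions]
    n = len(transactions)

    def support(itemset):
        return sum(itemset <= t for t in transactions) / n

    # Level 1: frequent single items
    items = {item for t in transactions for item in t}
    current = {frozenset([i]) for i in items if support(frozenset([i])) >= min_support}
    frequent = {s: support(s) for s in current}
    size = 1
    while current:
        size += 1
        # Join step: combine frequent itemsets of the previous size into candidates one item larger
        candidates = {a | b for a in current for b in current if len(a | b) == size}
        # Prune step: every subset of a frequent itemset must itself be frequent
        candidates = {c for c in candidates
                      if all(frozenset(s) in current for s in combinations(c, size - 1))}
        current = {c for c in candidates if support(c) >= min_support}
        frequent.update({s: support(s) for s in current})
    return frequent

def rules(frequent, min_confidence):
    """Derive rules X -> Y with confidence = support(X | Y) / support(X)."""
    found = []
    for itemset, supp in frequent.items():
        for r in range(1, len(itemset)):
            for lhs in map(frozenset, combinations(itemset, r)):
                confidence = supp / frequent[lhs]
                if confidence >= min_confidence:
                    found.append((set(lhs), set(itemset - lhs), round(confidence, 2)))
    return found

# Hypothetical shopping baskets
baskets = [
    {"shampoo", "aftershave", "shaving cream"},
    {"shampoo", "aftershave", "shaving cream", "soap"},
    {"shampoo", "aftershave"},
    {"shampoo", "soap"},
    {"aftershave", "shaving cream"},
]
freq = apriori(baskets, min_support=0.4)
for lhs, rhs, conf in rules(freq, min_confidence=0.6):
    print(sorted(lhs), "->", sorted(rhs), conf)
```

On these five baskets, the rule from shampoo and aftershave to shaving cream comes out with a confidence of 0.67, the same kind of statement as the 90% rule in the text.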

Summary

Unsupervised learning can be beneficial when you do not know exactly what to do with the data at hand or which direction the analysis should take. It thus gives the data scientist an opportunity to shed a little light into the dark.
